In July 2025, Anthropic announced weekly rate limits for Claude Code, framed as affecting fewer than 5% of subscribers. On March 31, 2026, the same company admitted publicly that users on paid plans were "hitting usage limits way faster than expected." Four days later, third-party coding tools were blocked from Pro and Max subscriptions; users had to either switch to metered API billing or stop using the agents they had built around the product. On April 8, 2026, "extra usage" rolled out across all Claude plans, with overages billed at standard API rates.

Anthropic is not alone. Inside the same April window, GitHub Copilot shifted to premium-request metering at four cents per excess request, with per-tier caps that leave the $10 Pro plan insufficient for any serious agent-mode use. Cursor replaced its flat "fast request" allowance with usage-based credit pools pegged to retail API rates, and community reports documented entire annual subscriptions depleted in a single day of heavy agent work. On April 9, OpenAI introduced a new $100 ChatGPT Pro tier, priced between its existing Plus plan and its higher-priced Pro plan and bundled with a 2x usage "promotion" that expires on May 31; the promotion implies an underlying baseline of 100–500 messages per five-hour window. A week earlier, on April 2, OpenAI's Codex product had moved from per-message to token-metered pricing. In April they all moved in the same direction: from flat-rate to metered, with heavy users targeted first.
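The shift from flat-rate to metered pricing is easiest to see as arithmetic. What follows is a minimal sketch, assuming a simple flat-fee-plus-overage model: the $10 base fee and the four-cent overage come from the Copilot terms above, while the 300-request monthly allowance is a hypothetical placeholder rather than a published figure, and real plans add model multipliers and caps that this ignores.

```python
# Hypothetical sketch of flat-fee-plus-overage billing, computed in integer
# cents to avoid floating-point rounding. The included allowance is an
# assumed parameter, not a figure from any vendor's published terms.


def monthly_bill_cents(base_cents: int, included: int, used: int,
                       per_excess_cents: int) -> int:
    """Base subscription fee plus a fixed charge for each request over the allowance."""
    excess = max(0, used - included)  # requests beyond the included allowance
    return base_cents + excess * per_excess_cents


if __name__ == "__main__":
    # $10 base plan and 4 cents per excess request (figures from the text);
    # the 300-request allowance is a hypothetical placeholder.
    for used in (300, 1_000, 5_000):
        cents = monthly_bill_cents(1_000, 300, used, 4)
        print(f"{used:>5} requests -> ${cents / 100:,.2f}")
```

Under those assumptions, 1,000 requests in a month bill at $38 and 5,000 at $198. The light user never sees the meter; the heavy user pays for it almost entirely.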

This is the subsidy phase ending, visible only if you built a workflow around the old cost assumption. The people noticing are the ones who shipped the harnesses, scripts, and agents the platform encouraged in the first place.

A caveat before proceeding. I am writing this using Anthropic's Claude Code, the product whose April repricing opens the essay. I use AI daily: research, drafting, code. The research for this piece was done with an AI assistant; the prose has been drafted and revised with one. I am not outside the trap I am describing. The essay is a report from inside the loop rather than a diagnosis from above it. That is the position from which it is worth reading.

Extraction, across more than three centuries

The subsidize-eliminate-extract cycle is older than software. Cory Doctorow gave it its modern name: "New platforms offer useful products and services at a loss, as a way to gain new users. Once users are locked in, the platform then offers access to the userbase to suppliers at a loss; once suppliers are locked in, the platform shifts surpluses to shareholders."[1] The frame is borrowed from platform economics. The mechanism is older.

England's handling of Indian textiles is the earliest instance the research turned up, and it is a two-stage operation rather than a single subsidy.

The first stage was coercion. At the start of the cycle India produced roughly a quarter of world textile output. The Calico Acts of 1701 and 1721 banned Indian fabrics from Britain to protect domestic producers, with punitive tariffs of 70–80% on anything that survived the ban. The East India Company then bound Indian weavers into exclusive contracts at suppressed prices, a regime that former Company employee William Bolts documented in 1772: weavers "seized and imprisoned, confined in irons, fined considerable sums of money, flogged," and silk winders in some districts cutting off their own thumbs to escape forced labour.

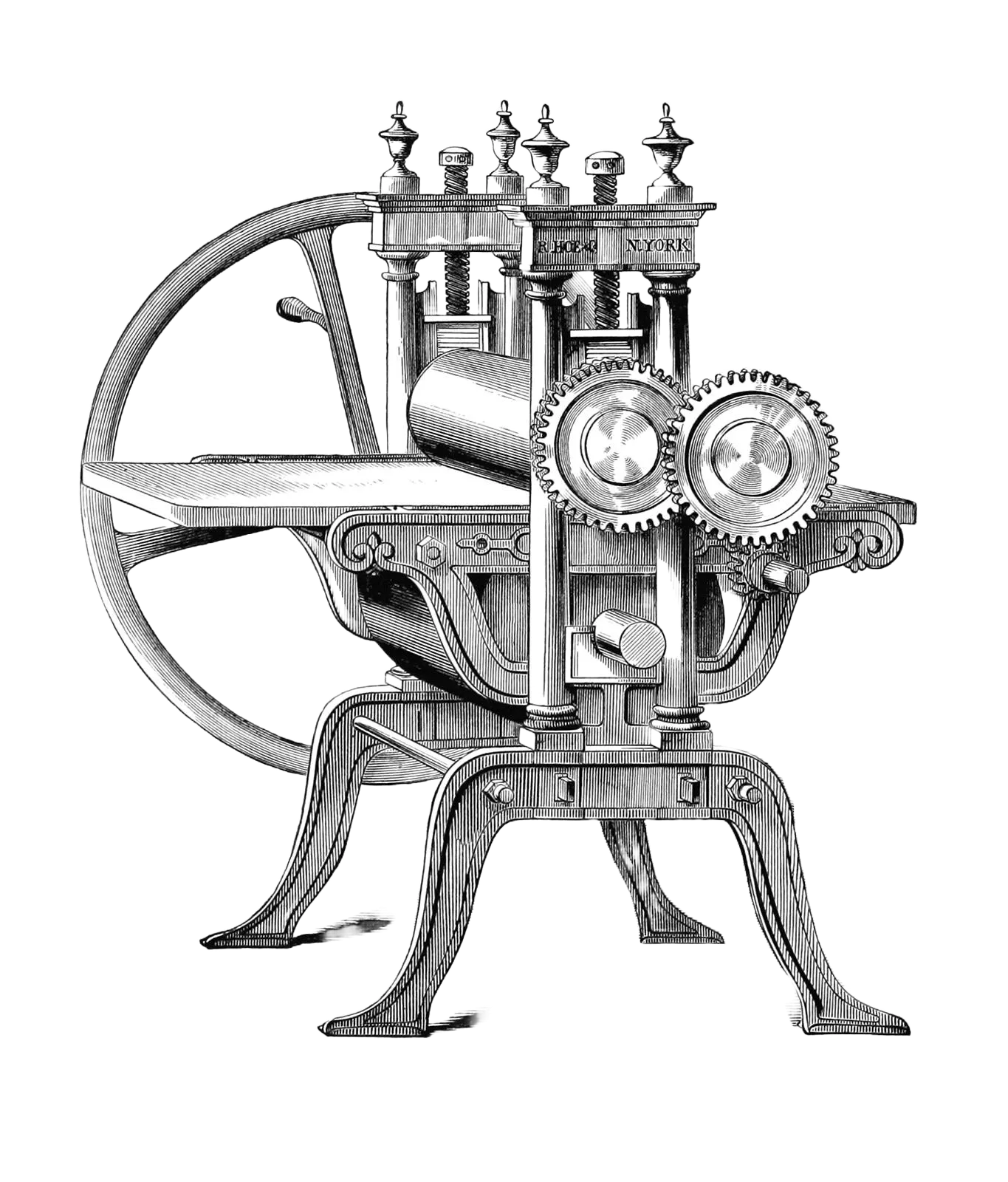

The second stage was machine-goods dumping. Once Britain's Industrial Revolution produced cheap mill-made cloth, the flow reversed: Lancashire textiles entered India at prices below the production cost of Indian handloom. British exports to India rose from a trickle to 60 million yards a year by 1830 and to more than a billion yards by 1870. Indian textile exports fell 98% between 1800 and 1860. Once the competing skill base was destroyed, British prices held at monopoly margins, and India's share of world GDP collapsed from above 20% at the start of the cycle to roughly 3% by independence.

The pattern that later becomes legible as a pure subsidy trap appears here in two-stage form: coercion did part of the work that later, subsidy-only versions would have to do through pricing alone. Standard Oil ran the pricing-only version a century and a half later. Henry Demarest Lloyd summed it up in a sentence in 1881: "It began by paying more than cost for crude oil, and selling refined oil for less than cost. It has ended by making us pay what it pleases for kerosene."[2]

The Green Revolution was the twentieth-century agricultural instance. Hybrid seeds subsidised by aid programmes replaced saved seed across South Asia; Vandana Shiva documented how Monsanto ended up controlling 95% of India's cotton seed market and what the arithmetic looked like for farmers: "All alternatives have been destroyed." Hubert Horan has chronicled the most recent classical instance for the past decade. Uber absorbed $31.5 billion in operating losses from 2014 through mid-2023, destroyed the taxi industry in the cities it entered, and raised prices 92% in some markets once the competition was gone.

That makes four. The same shape recurs across more than three centuries of cases documented in the research catalogue: AT&T's cross-subsidy of local service, Amazon's below-cost Kindle and its demolition of Quidsi, Google Maps' 1,400% price jump the day it stopped being free, Facebook's collapse of organic reach to 1–2%, the Chinese ride-hail and community-buying wars of 2014 and 2020, and MoviePass as the clean reductio.

The current instance is AI. It is structurally distinct.

Subsidised cognition is not new: forty years of cognitive debt

Every subsidy trap extracts the means to come back. Ivan Illich called this a radical monopoly: "A radical monopoly goes deeper than that of any one corporation or government. It can happen when tools are multiplied, and everyone is forced to use them."[3] That has been the mechanism since the Calico Acts. The weaver who stopped weaving could not negotiate with Lancashire once the loom was gone from the cottage. The farmer who stopped saving seed could not refuse the next year's pricing round once the indigenous varieties were lost. The cab owner who sold the medallion at $80,000 had no route back to the pre-Uber trade.

What is new in the genAI cycle is who the trap is catching.

For three centuries the subsidy traps have hit blue-collar, artisanal, and agricultural work. Weavers, artisans, small farmers, taxi owners, grocery workers. This is the first cycle where the class being swindled is the white-collar professional. The lawyer, the analyst, the designer, the software engineer, the doctor, the middle manager. The professions that were above the automation line in every previous cycle are this cycle's target. The framing of "AI as a productivity tool for knowledge work" is the same framing the East India Company supplied for "cheap textiles for British households" in 1720. The social positions of the beneficiary and the victim have swapped, but the shape is the same.

What is also not new is the cognitive mechanism the subsidy runs on. Automation-induced skill atrophy has been documented, costed, and institutionally responded to in aviation for forty years. The knowledge industries are encountering it for the first time, and they have not yet institutionalised any response.

Lisanne Bainbridge identified the mechanism in 1983, writing about cockpit automation: "Perhaps the final irony is that it is the most successful automated systems, with rare need for manual intervention, which may need the greatest investment in human operator training." The better the automation, the more skilled the operator needs to be during the rare intervention, and the less prepared they are for it.

Two subsequent accidents confirmed this in the most public way. On June 1, 2009, Air France 447 lost its speed sensors to ice over the Atlantic at night; the autopilot disconnected; the co-pilot in the right seat held the nose up through a three-and-a-half-minute stall, and all 228 people aboard died when the aircraft hit the ocean. The aircraft was flyable throughout. The crew no longer was. On July 6, 2013, Asiana 214 approached San Francisco in an automation mode that, without the crew understanding it, was letting airspeed bleed off; the tail hit the seawall at 28 feet per second; three teenagers died. In both cases the required intervention was precisely the one Bainbridge had said the operator would need to be trained for, and would not be.

The same mechanism is now measurable in knowledge work. In 2025, The Lancet Gastroenterology & Hepatology published the Budzyń study: after three months of AI-assisted colonoscopies, the adenoma detection rate of expert endoscopists performing procedures without AI dropped from 28.4% to 22.4%. These were clinicians with more than 2,000 procedures of experience each. Working alongside the AI did not make them better at the underlying task; it made them measurably worse, within one quarter.

Nataliya Kosmyna's MIT team ran a comparable experiment on essay writers the same year. Fifty-four participants, EEG measurement: the ChatGPT group showed 55% lower neural connectivity than the write-from-scratch group, and 83% of ChatGPT users could not quote a sentence from their own essay minutes after submitting it. Kosmyna coined the phrase "cognitive debt" for the short-term-performance, long-term-skill trade.

The clearest test on programmers comes from Anthropic itself. In 2026, Shen and Tamkin studied 52 developers learning a new library: the AI-using group scored 17% lower on post-task conceptual understanding. The maker's own research confirmed the pattern the platform depends on denying.

Bainbridge's 1983 paper on cockpits does not need to be updated to describe 2026 IDEs.

Switching costs, cognitive debt, and the naked king

Classical platform lock-in assumes the user is a rational actor who has calculated switching costs. The switching-costs literature, formalised by Farrell and Klemperer, treats the lock as a matter of expected future prices: "Consumers facing switching costs care about expected future prices as well as about current prices, and are therefore generally less sensitive to current prices."

The genAI subsidy adds two variables that the classical model does not carry. The first is that the user's capacity to evaluate the switch has been offloaded to the tool they are considering switching away from. If people are using Claude to understand Anthropic's pricing moves, they will hit a (pay)wall at some point.

The second is social.

People will accept being wrong with everyone over being right alone. If the whole profession uses the assistant and the profession's output is 7% worse than it was in 2022, nobody individually loses standing; the baseline drifted. If one person refuses the assistant and ships 20% slower, that person loses standing immediately, inside a quarter. Accuracy across a profession is hard to measure and slow to adjudicate; velocity is easy to measure and punished weekly. The naked-king equilibrium holds in any group where the cost of dissent is high and the cost of conformity is distributed. Long before the subsidy actually ends, it has become unremarkable to ship a paragraph, a function, or a contract drafted by the assistant, and remarkable to ship one drafted by hand. The social cost of the second move is the lock the classical switching-cost literature did not need to model, because pre-digital professions had public, inspectable output and the conformity discount was smaller.

This is what Bernard Stiegler called the third stage of proletarianisation: "In the nineteenth century, we saw the loss of savoir-faire, and in the twentieth the loss of savoir-vivre. In the twenty-first century, we are witnessing the dawn of the age of the loss of savoirs théoriques."[4] The lost knowledge is the capacity to judge when an output is wrong, when a source is shaky, when a suggestion is load-bearing versus decorative. It is the meta-skill. Lose it and you can still produce, but you cannot evaluate what you produced: the capacity traced in the earlier essay Taste is in the Bookmarks.

The closest corporate admission of the same shape comes from Boeing. In 2001, senior technical fellow L.J. Hart-Smith wrote internally about the 787 outsourcing strategy: "Outsourcing all of the value-added work is tantamount to outsourcing all of the profits… the day would eventually come when there wouldn't be enough in-house capability to even write the specs." Ten years later, in-house capability had decayed, the 787 programme cost $32 billion against a $5 billion original budget, and CEO Jim Albaugh conceded: "We spent a lot more money in trying to recover than we ever would have spent if we'd tried to keep the key technologies closer to home."

The concession did not produce a course correction. It produced the 737 MAX. Rather than restore the in-house capability Hart-Smith had warned about losing and certify a new airframe, Boeing added MCAS, a flight-control software patch meant to make the re-engined aircraft handle like the old one so that pilots would not need re-training. Southwest's existing order was protected; the cost line held; the capability was not rebuilt. Lion Air 610 went into the Java Sea on October 29, 2018. Ethiopian 302 went into the ground near Bishoftu on March 10, 2019. Three hundred and forty-six people died within five months. A congressional investigation and a Department of Justice deferred prosecution agreement followed. The capability Hart-Smith had told them they would lose was the capability that would have caught MCAS before it shipped. They had optimised the margin and lost the product that the margin was supposed to be on.

Absorptive capacity, in the sense Cohen and Levinthal gave it, is not recoverable on command. The subsidy phase builds a dependency that looks like productivity; the extraction phase prices the dependency; the user notices, at the moment of the price increase, that the skill needed to negotiate or switch is precisely the skill the tool has been quietly doing in their place. The dependency migrated from skill-in-head to paid API access.

The Max 20x user reading the April 8 note is inside this loop. Their harness was built during the subsidy; the overage is the extraction; the ability to decide whether the overage is reasonable is the skill the harness was designed to replace.

Why firms keep buying it

You could call this a personal problem if firms were protecting the skill. They aren't. Training a junior costs a firm real money now; the senior that junior becomes in five years may go work somewhere else. Every firm faces the same calculation, and the AI subsidy makes skipping the training line up with the quarterly target. An IESE economist, Enrique Ide, modelled the effect in 2025: in his model, automating 30% of entry-level tasks reduces total output by 20% over the following century. No single firm is responsible. The damage shows up at the scale of the profession, over a generation.

This is the organisational analogue of the individual lock. Matt Beane's five-site ethnography of robotic versus traditional surgery documented the concrete form. Traditional surgery put the attending surgeon and the resident side by side at the patient; the attending physically guided the resident's hands through each step of the procedure, ceding more of the operation as the resident gained competence. The robotic surgical console has one seat. The resident watches on a screen and cannot take the instruments during a live case. The formal replacement pathway is four hours of simulator time per year, far below the practice volume a surgical career requires. The top residents found their own practice time through other means: Beane measured them at roughly 300 hours per year against the 4 officially required. He calls this coping behaviour "shadow learning," and he has observed the same geometry across more than 30 professions where a tool has displaced an apprenticeship.

A firm that skips training moves the cost onto someone else. Shadow learning is the employee paying the bill in evenings and weekends. The output collapse Ide modelled is society paying the bill a generation later, in trained seniors who were never produced. The firm books real savings in quarter one; the costs are real too, and they sit on somebody else's ledger.

Not every kind of firm has this problem. Small bootstrapped teams that already price continuity and retention into their cost model, the tech ateliers, are the least exposed to this externality. They keep training because they cannot afford to lose the people they train. See Tech Ateliers, Not Big Tech for the earlier version of that argument.

The coalition and the counter-coalition

The April window is not four CEOs independently noticing the same thing. The frontier labs, the venture capital stack behind them, the hyperscalers whose inference substrate they rent, and the enterprise buyers whose CIOs count the training-line savings form a single interest bloc. The synchronised repricing is what a coordinated extraction phase looks like when it reaches the end of its runway. The moment the price moves, the coalition behind the pricing is visible.

The counter-coalition exists but is not yet organised. The professional guilds (bar associations, medical specialty boards, chartered engineering institutes, accounting bodies) hold the formal authority to mandate continued competence. They have not claimed a skill floor as a platform. European states hold the regulatory tools and have begun experimenting (Mistral, Hugging Face, sovereign AI strategies) without turning those moves into a coherent position. Mittelstand-shaped firms have the cost model that naturally resists the extraction clock. Juniors, the cohort Beane documented as paying shadow learning's bill, have the grievance but not the voice.

None of these actors has yet claimed the four counter-moves that follow as parts of a single political platform. The moves are listed below as parallel policy levers. They are, in fact, a platform, and the coalition that names it first wins the legitimacy fight.

What works: digital sovereignty, local inference, and the small-firm form

"Technology is not a destiny but a scene of struggle," Andrew Feenberg wrote.[5] Four structural moves hold up to scrutiny. None of them is a personal lifehack.

The first is institutional mandatory non-AI practice. The FAA issued SAFO 13002 in 2013 and AC 120-123 in 2022 requiring manual flying practice despite available automation. The USS Essex in 2022 navigated 1,800 nautical miles from Hawaii to San Diego by sextant alone, arriving on course after six days with an average deviation of two nautical miles. The US Army found 50% of 914 soldiers failing land navigation after a GPS-era training gap. Aviation and the military have institutional price signals (lives and kit) that force them to maintain a skill floor independent of the tool. Knowledge work does not. The live question is whether any knowledge profession manufactures such a floor before its training pipeline collapses.

The second is publicly-oriented infrastructure at the inference layer. The substrate forms around open-weight ecosystems more than national champions. Hugging Face hosts roughly 135,000 GGUF-formatted open-weight models, providing a neutral substrate for frontier model distribution. Mistral's Apache-licensed releases (Ministral, Codestral Mamba) add convivial weights to the pool, even as its flagship commercial models remain closed behind an API that mirrors the American pattern. Public funding helps seed this infrastructure but does not in itself make a model convivial; the licence and the weights do. Treating inference the way the EU has treated spectrum and rail, as a substrate on which multiple operators compete rather than as a private rent position, is the policy move that would matter. Whether this becomes the European position is unresolved; the argument is that it should.

The third is ownership at the personal and organisational level. Ollama registered 52 million monthly downloads in Q1 2026, up roughly 520× from the 100,000 of Q1 2023. Local inference on a Mac Studio M4 Max is a practical choice for coding work, not merely a principled gesture. The right-to-repair frame ported into cognition: let it break, see if you own it.

The fourth is the small-firm form. The KfW Mittelstandspanel reports 3.87 million German SMEs that produce roughly 50% of GDP and make up 96.9% of exporting firms, with 2.7% employee turnover against the 20%+ typical of blitzscaled firms. Whether the Mittelstand actually resists AI subsidy capture over the current cycle is too early to tell. The structural argument is that its cost model already prices training, continuity, and retention, and that the subsidy's operating logic of growth-you-cannot-refuse has less purchase on firms that do not optimise for growth in the first place. This is the Digital-Mittelstand thesis, carried forward into a cycle that will test it.

The four share a price signal the subsidy does not set.

Or: the trap closes too early

The four previous cycles closed slowly. The East India Company spent the better part of a century displacing Indian textiles before British consumer prices registered the extraction. Standard Oil had more than a decade of rebates before Lloyd wrote the 1881 sentence. Uber burned capital for ten years before the Rakuten index documented a 92% price rise. Each cycle gave the dependency time to metabolise. The boiling-frog metaphor, used correctly, describes pacing.

The genAI subsidy may not have the pacing.

The cognitive-atrophy numbers are real, and they are young. Budzyń measured a six-percentage-point drop in three months. Kosmyna measured EEG effects across a single essay-writing session. Shen and Tamkin's AI-using developers scored 17% lower on conceptual understanding in a study that lasted hours. These are short-horizon measurements of early dependency, not decade-long studies of a generation that never learned the underlying craft. Most people using Claude Code in 2026 were shipping software without it in 2020. The skill is still there, underneath the subsidy.

Inside that window, the providers are repricing. April 2026 is teaching the attentive user three things: the flat rate was never the product, the subsidy was always a ramp toward metering, and the heavy user is the target rather than the ally. The extraction attempt is itself an educational moment, arriving before the cognitive lock has snapped shut. The providers are teaching us, too early, that they cannot be trusted.

The substitute infrastructure already exists. The open-weight and local-inference options named above fall short of the frontier but are perfectly serviceable for most daily tasks. Closing the ChatGPT tab and running Qwen2.5-Coder or DeepSeek-Coder locally is not an act of heroism. It is what these jobs looked like eighteen months ago, for the same professionals doing the same work.
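For readers who want to try the local route, here is a minimal sketch of sending a prompt to a locally served model over HTTP. It assumes Ollama is running on its default port (11434) and that a Qwen2.5-Coder build has already been pulled under the tag `qwen2.5-coder`; both are assumptions about your setup, and the helper names `build_request` and `generate` are hypothetical. Only the Python standard library is used.

```python
import json
import urllib.request

# Assumes an Ollama server on its default local port and a model already
# pulled (e.g. `ollama pull qwen2.5-coder`). Both are setup assumptions.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(model: str, prompt: str) -> bytes:
    """Encode a non-streaming generate request as JSON bytes."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return json.dumps(payload).encode("utf-8")


def generate(model: str, prompt: str, url: str = OLLAMA_URL,
             timeout: float = 120.0) -> str:
    """Send one prompt to the local server and return the generated text."""
    req = urllib.request.Request(
        url,
        data=build_request(model, prompt),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    # With stream=False the server replies with a single JSON object whose
    # "response" field holds the full completion.
    return body["response"]
```

With the server running, `generate("qwen2.5-coder", "Write a function that reverses a string.")` returns the completion as a plain string. Turning streaming off trades token-by-token output for a single JSON reply, which keeps the sketch short; nothing leaves the machine.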

The 2x "promotions" bundled with the April repricing are attempts to buy the trust back through temporary boosts. Whether that works depends on whether the user has already seen the mechanism. The previous four cycles had time on their side. This one may not.

The margin recomposition and the invention

Every new technology brings two things at once: capabilities that did not exist before, and a rebundling of the value chain that redistributes the profit margin. In the earlier four cycles, the invention came first and the margin capture followed. Lancashire built real mechanised textiles. Standard Oil built real refinery scale. Monsanto-era agriculture built real yield. Uber built real dispatch logistics. The extraction was predatory, but it arrived on top of a genuine new thing, and the new thing was, in the end, available to the world at a different price.

The genAI subsidy is speedrunning the margin recomposition before the new things have been invented.

The AI-native products of 2030 do not exist yet. The agents, the autonomous workflows, the domain-specific systems that will be the actual productive output of this technological wave are still under construction. MIT's report The GenAI Divide: State of AI in Business 2025, an audit of enterprise deployments, found that 95% of corporate GenAI pilots produced no measurable revenue or profit improvement, against roughly $30 to $40 billion of enterprise investment. The pricing and the lock-in of those systems, however, are already being set, in April 2026, by incumbents whose business model requires the margin to be booked before the substrate has finished cooling. If the extraction wins the race, the bill arrives for products that are still mostly vapourware, paid by professionals whose cognitive capacity to evaluate whether the bill is reasonable has been quietly absorbed by the product being billed for.

The test the reader can run is small and personal. Write a paragraph without the assistant. Debug a function without the completion. Trace a claim to the primary source without asking the model to summarise it. If the gap between what you produce under the subsidy and what you produce without it has widened over the last year, the subsidy has been working as designed.

The test at the industry level is structural: whether the margin recomposition happening now leaves any oxygen for the inventions it was supposed to pay for. The actors who could change the pacing (regulators, the guild boards that could mandate non-AI practice, and the enterprise buyers who could refuse the April 2026 overage terms) have not yet acted as a coalition. If they do not, the race plays out on its current schedule, and we get the bill without the nice new things.

The Max 20x user reading the April 8 note still has the skill. The moat is whether you kept it. The question above it is whether we keep the room for anything new to be built at all.

Cory Doctorow, Enshittification, Farrar, Straus and Giroux, October 2025. The verbatim passage is from Doctorow's Financial Times op-ed "'Enshittification' is coming for absolutely everything," February 8, 2024. ↩︎

Henry Demarest Lloyd, "The Story of a Great Monopoly," The Atlantic, March 1881. ↩︎

Ivan Illich, Tools for Conviviality, Harper & Row, 1973, ch. 3. ↩︎

Bernard Stiegler, Nanjing Lectures 2016–2019, Open Humanities Press, 2020. Open-access PDF: OAPEN. Three-stage proletarianisation framework developed across For a New Critique of Political Economy (2010) and the Technics and Time series, 1994–2011. ↩︎

Andrew Feenberg, Questioning Technology, Routledge, 1999. Full text PDF: SFU. ↩︎