In August 2023, a security researcher noticed that Zoom had quietly updated its terms of service. Section 10.4 now granted the company a "perpetual, worldwide, royalty-free, sublicensable" license to all customer content for purposes including "machine learning, artificial intelligence, training." The investigative outlet Bellingcat dropped its Zoom Pro subscription. Within 48 hours, CEO Eric Yuan walked the terms back, calling it a "process failure."

Sixteen months later, on January 4, 2025, Gravy Analytics discovered that someone had breached its AWS cloud environment. Gravy processes 17 billion smartphone signals per day from approximately one billion devices worldwide. A hacker posted sample data on a Russian cybercrime forum: over 30 million location data points, harvested from apps including Tinder, Grindr, Candy Crush, Microsoft 365, Muslim prayer apps, and pregnancy trackers. Security researchers found tracked devices at the White House, the Kremlin, and the Vatican. Most of the app developers whose products funneled data to Gravy did not know it was happening. The data entered through real-time advertising bid auctions, intercepted in the milliseconds between a page loading and an ad appearing.

Two stories, among dozens, with the same pattern.

Zoom's code is sealed. Its algorithms are proprietary. Its data flows are invisible. We cannot see into the tool. Gravy's pipeline was invisible too, to the billion people whose phones it tracked every day. But their lives were transparent down to the building they slept in.

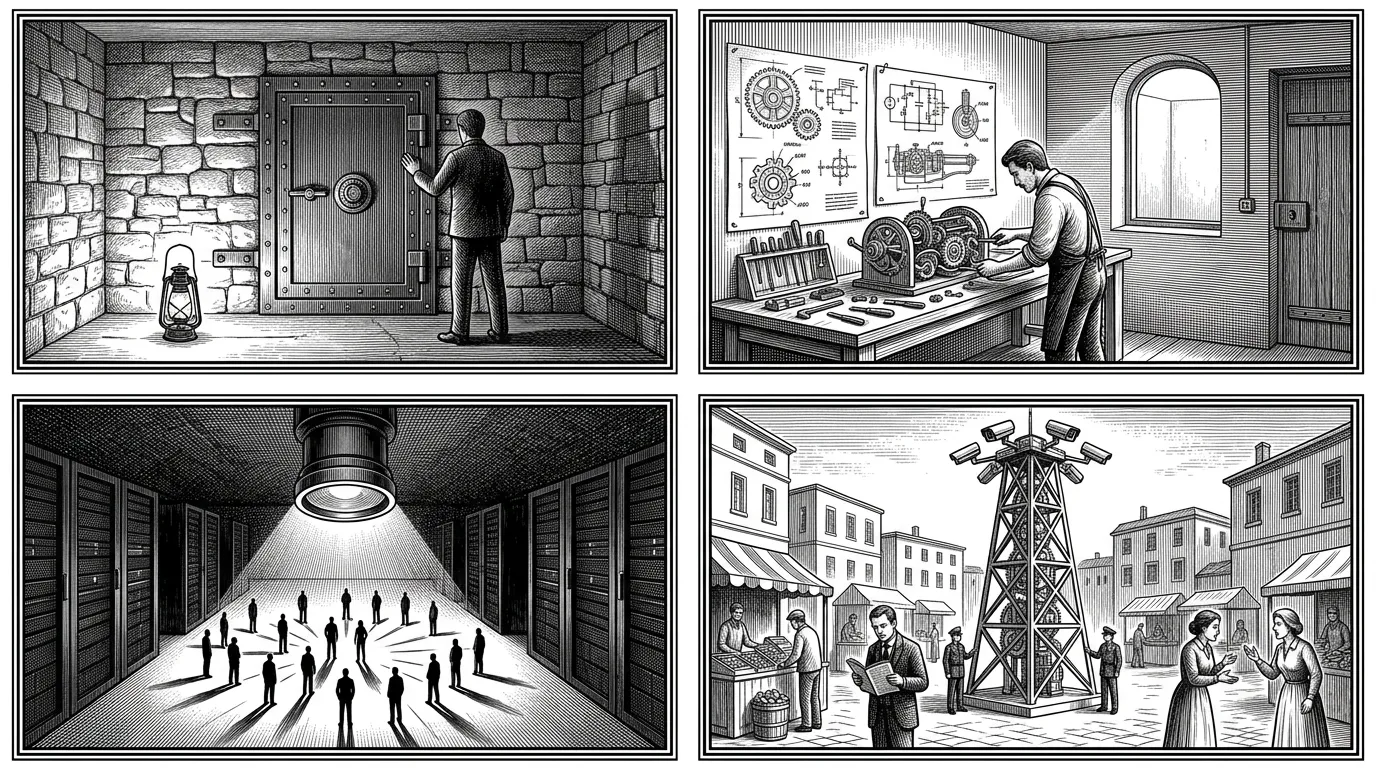

We want to see into our tools. We do not want our tools to see into us. The current regime has it exactly backwards.

A previous article introduced the first axis: transparent technologies, tools we can understand, inspect, and repair. That axis is necessary but incomplete. A tool can be perfectly transparent and still surveil you. This article adds the second axis: opaque lives. The two together form the polarity we need.

Two transparencies, one word

We use the word "transparency" for two radically different things. The conflation is the source of the paradox.

Tool transparency is the quality philosopher Ivan Illich called conviviality: technologies designed so that users can understand, direct, and repair them.[1] The Framework laptop with its published schematics and 10/10 iFixit repairability score. A Rails monolith whose architecture is visible to the team that maintains it. The bicycle you can fix with a screwdriver. Transparency here means the user sees into the machine.

Life transparency is what sociologist Shoshana Zuboff named surveillance capitalism: an economic logic that claims human experience as raw material for behavioral prediction.[2] The data broker holding 11,000 data points on every American consumer. The advertising network tracking your GPS position to sub-10-meter precision. The terms-of-service update that silently claims your conversations as training data. Transparency here means the machine sees into the user.

These two transparencies pull in opposite directions. The first expands autonomy. The second destroys it. Treating them as a single value, in the same sentence, in the same policy debate, is how the current regime sustains itself.

The relationship forms a matrix:

| | Opaque tool | Transparent tool |
|---|---|---|
| Opaque life | Old-school privacy (incomplete) | The goal |
| Transparent life | Current Big Tech | Surveillance trap (worst) |

Most of Big Tech occupies the bottom-left quadrant: opaque tools (you cannot read the algorithm), transparent lives (the algorithm reads you). The bottom-right quadrant is worse: add open tools to the surveillance apparatus. An open-source facial recognition system deployed by an authoritarian state. Tools and lives both visible, nobody protected.

The top-left is where privacy advocates often stop: reject the tool entirely, go off-grid. But withdrawal cedes the field to extractive systems and leaves no alternative for the billions who need digital infrastructure to participate in public life.

The goal is top-right. Transparent tools, opaque lives. You can see into the machine. It cannot see into you. Signal, with its audited open-source protocol and sealed-sender metadata protection, sits here. So does a self-hosted Nextcloud instance running on European servers, with your documents under your control and no behavioral data leaving the box.

The matrix is the test. For every tool, every platform, every system we adopt or build: which quadrant does it occupy?
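Read as a decision procedure, the matrix reduces to two yes/no questions. Below is a minimal Python sketch that assumes each tool can be scored on two booleans, `tool_inspectable` and `life_exposed`. Both names are hypothetical and chosen for illustration. Real tools sit on gradients, so treat this as a checklist, not a measurement.

```python
from dataclasses import dataclass


# Hypothetical two-axis model of the matrix. Real systems are rarely
# this binary; reducing each axis to a boolean is a simplifying assumption.
@dataclass(frozen=True)
class Tool:
    name: str
    tool_inspectable: bool  # can the user see into the machine? (tool axis)
    life_exposed: bool      # can the machine see into the user? (life axis)


# Quadrant labels from the matrix, keyed by (tool_inspectable, life_exposed).
QUADRANTS = {
    (False, False): "Old-school privacy (incomplete)",
    (True, False): "The goal",
    (False, True): "Current Big Tech",
    (True, True): "Surveillance trap (worst)",
}


def quadrant(tool: Tool) -> str:
    """Return the matrix quadrant a tool occupies."""
    return QUADRANTS[(tool.tool_inspectable, tool.life_exposed)]


if __name__ == "__main__":
    for t in [
        Tool("Signal", tool_inspectable=True, life_exposed=False),
        Tool("Closed ad-funded platform", tool_inspectable=False, life_exposed=True),
    ]:
        print(f"{t.name}: {quadrant(t)}")
```

Running it places Signal in "The goal" and the closed ad-funded platform in "Current Big Tech". Keeping the two questions separate is what prevents the conflation: an open-source tool that still exposes its users lands in the worst quadrant, not the best.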

The machinery of the wrong quadrant

The bottom-left quadrant is not an accident. It is the engine of a $600-billion-per-year advertising industry.

The global data brokerage market reached an estimated $300 billion in 2024. Acxiom maintains profiles on 260 million individuals across 190 million American households, with over 11,000 data points per person. The Financial Times benchmarked the price of a basic demographic profile at half a thousandth of a dollar. Lists of people with specific health conditions (anorexia, substance abuse, depression) sell for $79 per 1,000 names. Amazon's Shopper Panel pays consumers $10 per month for receipt data that brokers resell at fractions of a cent per person: a price gap of roughly 10,000x.

Between 2023 and 2025, the major platforms attempted a coordinated land grab on user content for AI training:

| Company | What they tried | What happened |
|---|---|---|
| Zoom (2023) | "Perpetual, worldwide" license for AI training | Walked back in 48 hours |
| Adobe (2024) | Mandatory TOS claiming derivative rights for ML | Artist tweet hit 5M views; president overhauled terms |
| Meta (2024) | AI training on EU public posts, opt-out only | noyb filed complaints in 11 countries; DPC forced pause |
| LinkedIn (2024) | AI training toggle enabled by default | Class action filed; EU data excluded |

The pattern: claim content retroactively, face backlash, retreat partially, keep whatever stuck. The question shifted from "will my data be sold to advertisers?" to "will my conversations train systems I cannot inspect?"

Critical theorist Andrew Feenberg explains how these defaults become invisible.[3] The decisions embedded in code, in toggle states, in terms of service are political choices designed to look like neutral engineering. When LinkedIn defaults Premium users into AI training and buries the opt-out three menus deep, that is a standpoint expressed at the level of design. The opacity is the feature.

The apparatus extends beyond advertising into government surveillance. Between 2019 and 2020, US Customs and Border Protection conducted over 55,000 queries on commercially purchased smartphone location data. In one three-day period in 2018, CBP obtained 113,654 location points from a single area in the southwestern United States. The FBI signed a contract worth up to $27 million with data broker Babel Street for 5,000 licenses to its location-tracking tool. The Office of the Director of National Intelligence's own declassified report admitted what this means: the intelligence community "does not even know" which of its agencies are buying what data, or how much they are collecting.

In the European Parliament, 890 full-time Big Tech lobbyists outnumber the 720 MEPs they target. The tech industry's EU lobbying bill: €151 million per year, up 56% since 2021. The wrong quadrant is not a market failure. It is a market working as designed.

The correct polarity already exists

The top-right quadrant is not theoretical. A growing ecosystem of tools achieves the correct polarity: transparent to the user, opaque to surveillance.

| Layer | Tool | Transparent how | Opaque how |
|---|---|---|---|
| Hardware | Framework Laptop | Published schematics, 10/10 iFixit | No telemetry requirement |
| Communication | Signal | Open-source protocol, audited | Sealed sender, minimal metadata |
| Productivity | Nextcloud | Open source, self-hostable | Data stays on servers you control (300K+ German federal gov users) |
| Infrastructure | 37signals | Full cloud exit, public accounting | No third-party data dependency |
| Protocol | Matrix | Federated, multiple implementations | Self-hostable servers, end-to-end encryption (France: Tchap for all civil servants) |

These are not niche curiosities. In August 2025, French Prime Minister Bayrou issued a circular mandating Tchap, built on the Matrix protocol, for all government communications. The circular bans WhatsApp, Signal, and Telegram for civil servants, effective September 1. DINUM joined the Matrix.org Foundation as its first government Silver Member. Germany's Schleswig-Holstein replaced Microsoft across 25,000 workstations with LibreOffice and Linux. Its digital minister framed the decision explicitly as digital sovereignty: "State sovereignty today is no longer determined solely by military strength, but by the ability to steer, control and further develop digital systems." 37signals documented their cloud exit in real time: a $3.2 million annual AWS bill replaced by owned hardware, projecting over $10 million in savings across five years.

Philosopher Helen Nissenbaum provides the framework for why these tools satisfy users in a way that "privacy settings" never do.[4] People do not want to block all information flow. They want information to flow appropriately: to its intended recipient, for its intended purpose, under norms they understand. A self-hosted Nextcloud instance achieves this. You know what the tool does (you can read the code). The tool does not profile you (it collects no behavioral surplus). Information flows appropriately because the architecture makes inappropriate flows structurally impossible.
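Nissenbaum's idea can be modeled as a check on flows rather than on data. Below is a toy Python sketch that assumes a context's norms can be listed as permitted (recipient, information type, purpose) triples. The `Flow` and `Context` names and the example norms are hypothetical illustrations, not drawn from Nissenbaum's book. The same document passes or fails depending on where it goes and why.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(frozen=True)
class Flow:
    """One movement of information: who receives what, and for what purpose."""
    recipient: str
    info_type: str
    purpose: str


@dataclass
class Context:
    """A social context with an explicit list of permitted flows (its norms)."""
    name: str
    norms: set[tuple[str, str, str]] = field(default_factory=set)

    def is_appropriate(self, flow: Flow) -> bool:
        # Default-deny: a flow is appropriate only if it matches a norm of
        # this context; anything not explicitly permitted is a violation.
        return (flow.recipient, flow.info_type, flow.purpose) in self.norms


# Hypothetical norms for a self-hosted document server.
office = Context(
    "self-hosted documents",
    norms={("colleague", "shared document", "collaboration")},
)

ok = Flow("colleague", "shared document", "collaboration")
leak = Flow("ad broker", "shared document", "behavioral profiling")

print(office.is_appropriate(ok))    # True
print(office.is_appropriate(leak))  # False
```

The default-deny choice is deliberate in this sketch: a flow the context never anticipated counts as inappropriate, even if the same information was acceptable to share for another purpose.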

Honesty about scale: Signal has 70 to 100 million monthly active users. WhatsApp has three billion, a ratio of roughly 30 to 40 to one. Framework and Fairphone have sold roughly two million devices combined. Samsung ships 259 million per year. Nor does consent offer a counterweight. A Security.org experiment found that 98% of respondents agreed to a consent form granting the researchers naming rights to their firstborn child, including every participant who claimed to read agreements thoroughly. Only 15% of cookie consent banners across the EU meet minimum GDPR compliance.

The correct polarity exists, works, and is growing. European governments mandating sovereign tools, regulatory pressure through the Digital Markets Act and the AI Act, and institutional procurement at scale are creating the sustained revenue that moves these tools from the enthusiast tier to the mainstream. The question is speed, not viability.

What the pre-moderns already built

The top-right quadrant is not a modern invention. Four traditions, separated by centuries and continents, independently designed for the same principle: public tools and spaces remain legible; private lives remain shielded.

The Talmudic doctrine of hezek re'iyah holds that unwanted observation is a form of legally cognizable damage. The Mishnah specifies: "A person should not open windows that face a shared courtyard." The Ramban (Nachmanides) ruled that this harm is real and substantive, "similar to physical bodily damage," and that no prescriptive rights can ever be acquired for gazing. Even decades of looking do not create a right to look. Rabbi Ari Hart applied this directly to the digital age: "According to this Jewish opinion, the NSA gathering data about you, or for that matter Facebook, Google or Amazon, might be harmful to you even if nothing sinister is done with it."

The traditional Islamic house translated a similar principle into architecture. The Quran commands: "Do not enter houses other than your own houses until you ascertain welcome." Exterior walls reveal nothing to the street. All beauty is directed inward toward elaborate courtyards. The mashrabiya, a projecting wooden lattice screen, functions as a technology of asymmetric visibility: occupants see out while outsiders cannot see in. The entire built form of the traditional Islamic city embodies the polarity. Public infrastructure (markets, mosques, courts) remained open. Domestic life was architecturally shielded.

The Carthusian monastic order took productive privacy to its logical conclusion. Each cell is a self-contained hermitage. Food arrives through a revolving compartment called a "turn" that delivers necessities without visual contact between people. "The solitude of the Carthusian is ensured and protected by three concentric circles: the desert, the enclosure, and the cell."

Jean-Pierre Claris de Florian gave the principle its most quoted form in 1792. In Le Grillon (The Cricket), a cricket hidden in flowering grass envies a butterfly's brilliant visibility. Children arrive, chase the butterfly, seize it, and tear it apart. The cricket draws the lesson: "Pour vivre heureux, vivons cachés." To live happily, live hidden.

Hannah Arendt explained why this matters for everyone, not only crickets and monks.[5] A life spent entirely in public becomes shallow. It loses "the quality of rising into sight from some darker ground which must remain hidden." Depth requires hiddenness. When that ground is exposed to continuous observation, the result is conformism, self-censorship, and the steady erosion of the capacity for genuine thought. Privacy is not about having something to hide. It is about having somewhere to be.

A caveat. The mashrabiya shielded domestic life, but in practice it also enforced women's seclusion. Talmudic courtyard law protected households headed by men. The structural insight transfers; the social hierarchy does not. What we take from these precedents is the architectural principle (unwanted visibility is damage, deliberate opacity is protection), not the power relations it served.

Talmudic building codes, Islamic lattice screens, Carthusian revolving compartments, a French fable about a cricket. Four civilizations, one structural insight that survives its context: the deliberate construction of opacity is a technology of protection.

Back to Zoom and Gravy Analytics.

Zoom walked back its terms in 48 hours. The FTC issued an enforcement order against Gravy Analytics in January 2025. Both events showed the polarity can be contested.

But the machinery persists. Seventeen billion signals per day from one billion devices is not undone by a policy reversal. The $600 billion advertising market does not dismantle itself because one data broker got caught. The 890 lobbyists in Brussels did not go home.

The previous article, "Let it break. See if you own it.", asked one question: can you see into your tools? This article asks the flip side: can your tools see into you? A tool that is transparent to you and opaque about you is a convivial tool. A tool that is opaque to you and transparent about you is a surveillance instrument. We have been calling both "technology" as though they were the same thing.

The old civilizations knew the difference. They built walls, lattice screens, revolving compartments, and legal doctrines to maintain it. They understood that the quality of public life depends on the depth of private life, and that depth requires hiddenness.

Transparent tools. Opaque lives. The pre-moderns built walls for this. We need to build software for it.

Pour vivre heureux, vivons cachés.

[1] Ivan Illich, Tools for Conviviality (1973): "A convivial tool can be easily used, by anybody, as often or as seldom as desired, for the accomplishment of a purpose chosen by the user."

[2] Shoshana Zuboff, The Age of Surveillance Capitalism (2019): "Surveillance capitalism unilaterally claims human experience as free raw material for translation into behavioral data."

[3] Andrew Feenberg, Transforming Technology (2002): "The technical code expresses the 'standpoint' of the dominant social groups at the level of design and engineering."

[4] Helen Nissenbaum, Privacy in Context (2009): "What people care most about is not simply restricting the flow of information but ensuring that it flows appropriately."

[5] Hannah Arendt, The Human Condition (1958): "A life spent entirely in public, in the presence of others, becomes, as we would say, shallow. While it retains its visibility, it loses the quality of rising into sight from some darker ground which must remain hidden if it is not to lose its depth in a very real, non-subjective sense."